Threads from different blocks cannot be synchronized or interact directly in any way. Threads from different warps, but within the same block can execute in any order, but can be forced to synchronize by the programmer. Threads within a single warp execute in a perfect sync, in SIMD fahsion. In addition, threads are organized into warps, each containing exactly 32 threads. These numbers can be checked at any time by any running thread and is the only way of distinguishing one thread from another. Function ponter calls, and virtual method calls, while supported in most newer devices, usually incur a major performance penality.Įach thread is identified by a block index blockIdx and thread index within the block threadIdx.

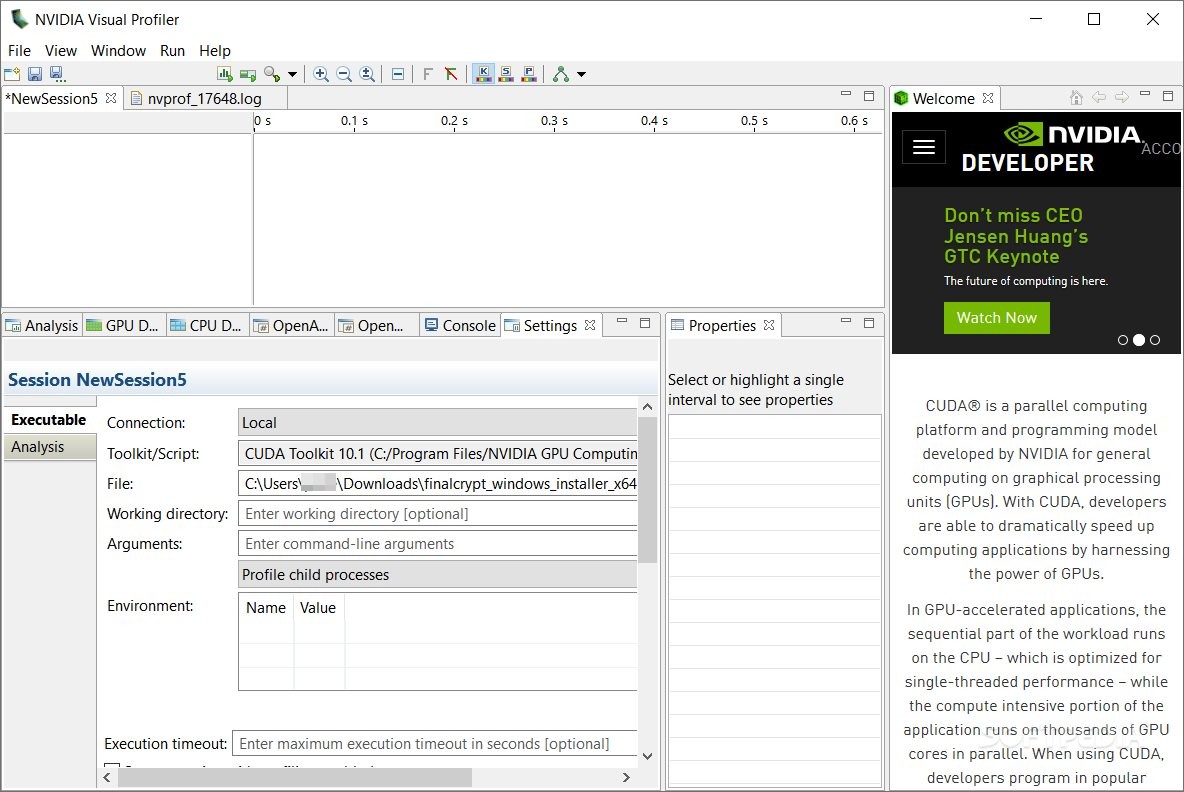

Because of their number, CUDA threads operate with a few registers assigned to them, and very short stack (preferably none at all!).įor that reason, CUDA compiler prefers to inline all function calls to flatten the kernel so that it contains only static jumps and loops. Threads are 'lightweight' with minimal context, allowing the hardware to quickly swap them in and out. the thread - a scalar sequence of instructions executed by a single CUDA core.On the other hand, if resources permit, more than one block may run on the same SM, but the programmer cannot benefit from that happening (except for the obvious performance boost). If there are too many blocks, some may execute sequentially after others. As such, blocks can communicate only through global memory.īlocks are not synchronized in any way. the block - is a semi-independent set of threads.It is specified as a one or two dimentional set of blocks the grid - represents all threads that are spawned upon kernel call.Kernel is invoked with a call configuration which specifies how many parallel threads are spawned. The physical structure of the GPU has direct influence on how kernels are executed on the device, and how one programs them in CUDA. This effectively causes the SM to operate in 32-wide SIMD mode. In addition, each SM features one or more warp schedulers.Įach scheduler dispatches a single instruction to several CUDA cores. Each core can handle a few threads executed concurrently in a quick succession (similar to hyperthreading in CPU). Their precise number depends on the architecture. CUDA core - a single scalar compute unit of a SM.Each SM operates nearly independently from another, using only global memory to communicate to each other. 
streamming multiprocessor (SM) - each chip contains up to ~100 SMs, depending on a model.the chip - the whole processor of the GPU.The CUDA-enabled GPU processor has the following physical structure: kernel - a function that resides on the device that can be invoked from the host code.A single host can support multiple devices. device - refers to a specific GPU that CUDA programs run in.host - refers to normal CPU-based hardware and normal programs that run in that environment.However, due to the architecture differences, most algorithms cannot be simply copy-pasted from plain C++ - they would run, but would be very slow. On the other hand, GPU is able to run several thousands of threads in parallel and even more concurrently (precise numbers depend on the actual GPU model).ĬUDA is a C++ dialect designed specifically for NVIDIA GPU architecture. GPUs are highly parallel machines capable of running thousands of lightweight threads in parallel.Įach GPU thread is usually slower in execution and their context is smaller. CUDA is a proprietary NVIDIA parallel computing technology and programming language for their GPUs.
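As a rough illustration of the execution model described in this article, here is a minimal sketch in which each block sums its own slice of an array. The kernel name `blockSum`, the constant `kThreadsPerBlock`, and the array size are assumptions made for this example, not anything prescribed by CUDA. It shows a call configuration, the use of `blockIdx` and `threadIdx` to tell threads apart, synchronization of the warps inside one block with `__syncthreads()`, and the use of global memory to carry results out of each block.

```cuda
#include <cstdio>
#include <cuda_runtime.h>

// Assumed block size for this sketch: 128 threads, i.e. 4 warps of 32 threads.
constexpr int kThreadsPerBlock = 128;

// Hypothetical kernel: every block sums its own slice of `in`
// and writes a single partial sum to `out[blockIdx.x]`.
__global__ void blockSum(const int* in, int* out, int n) {
    __shared__ int partial[kThreadsPerBlock];  // visible to one block only

    // blockIdx and threadIdx are how a thread distinguishes itself from others.
    int global = blockIdx.x * blockDim.x + threadIdx.x;
    partial[threadIdx.x] = (global < n) ? in[global] : 0;

    // Warps of the same block may run in any order, so wait for all of them.
    __syncthreads();

    // Tree reduction in shared memory; every thread reaches each barrier.
    for (int stride = blockDim.x / 2; stride > 0; stride /= 2) {
        if (threadIdx.x < stride) {
            partial[threadIdx.x] += partial[threadIdx.x + stride];
        }
        __syncthreads();
    }

    // Blocks do not synchronize with each other: results leave via global memory.
    if (threadIdx.x == 0) {
        out[blockIdx.x] = partial[0];
    }
}

int main() {
    constexpr int n = 1000;
    constexpr int blocks = (n + kThreadsPerBlock - 1) / kThreadsPerBlock;

    int hostIn[n];
    for (int i = 0; i < n; ++i) hostIn[i] = 1;

    int* devIn = nullptr;
    int* devOut = nullptr;
    cudaMalloc(&devIn, n * sizeof(int));
    cudaMalloc(&devOut, blocks * sizeof(int));
    cudaMemcpy(devIn, hostIn, n * sizeof(int), cudaMemcpyHostToDevice);

    // Call configuration: a 1D grid of `blocks` blocks, each with kThreadsPerBlock threads.
    blockSum<<<blocks, kThreadsPerBlock>>>(devIn, devOut, n);
    cudaError_t launchErr = cudaGetLastError();

    // cudaMemcpy waits for the kernel to finish before copying.
    int hostOut[blocks];
    cudaError_t copyErr =
        cudaMemcpy(hostOut, devOut, blocks * sizeof(int), cudaMemcpyDeviceToHost);

    cudaFree(devIn);
    cudaFree(devOut);

    if (launchErr != cudaSuccess || copyErr != cudaSuccess) {
        std::printf("CUDA error\n");
        return 1;
    }

    // The per-block results are combined on the host.
    int total = 0;
    for (int b = 0; b < blocks; ++b) total += hostOut[b];
    std::printf("total = %d (expected %d)\n", total, n);
    return total == n ? 0 : 1;
}
```

The final combination is done on the host because blocks have no direct way to wait for one another within this kernel. A second kernel launch or atomic operations on global memory would be alternative ways to finish the sum on the device.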
